Problem

A method must be provided to input text that is simple, natural, requires little training and can be used in any environment.

Solution

Pen interfaces can be an excellent alternative to keypads or touch screens when touch is unavailable due to environmental conditions (gloves, rain), when the device is used while on the move, or just to provide additional precision and obscure less of the screen. Pen input used to be standard for touch-sensitive screens, when relatively low-resolution resistive panels were the norm; capacitive touch screens have since moved to direct touch and multi-touch input, but pens as an add-on to touch are making a slow resurgence.

Text input is particularly suited to pen devices, especially for populations (or cultures) with little or no experience with keyboard entry, where no standard keyboard exists for the data type, or under the environmental conditions mentioned above. In situations where only one hand may be used to type, character entry by pen can often be much faster than the use of a virtual keyboard.

Other systems, including newly-developed capacitive screens, can use specialized pens to isolate touch input, allowing the user to more naturally interact with the device by resting their hand on the screen. Mode switching is also available, either automatically or manually, to

Pen input devices may be used for multiple types of interfaces, and the pen will often be the primary or only input method for the device. This pattern covers only character and handwriting input and correction. Although any pen input panel should also support a virtual keyboard mode, this mode is covered under the Keyboards & Keypads pattern.

Variations

Pen input for text entry may fall into one of two modes:

Word entry – An area is provided where users may input gestural writing, as they would with a pen on paper. Printed characters are the most common, but script styles should also be supported. The device will use the relationships between characters to translate the writing to complete words, based on rules associated with the language, and will automatically insert spaces between words. The input language, and sometimes a special technical dictionary, must be loaded.

Character entry – The input panel is broken up into discrete spaces in which individual characters may be entered. This may be accomplished by simply dividing up the word entry area with ticks or lines, or by presenting a series of individual input panels. The device will translate individual characters, and will generally disregard the relationships between adjacent characters. The user is responsible for spelling words correctly and for adding spaces between words. The language must be set correctly for the fastest recognition, but alternatives within the language family will also be available (e.g. all accented characters for any language written in the Roman alphabet).
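As a rough illustration of dividing the entry area into discrete spaces, the sketch below assigns each pen stroke to a character cell by the horizontal midpoint of its bounding box. The names `Stroke`, `cell_index` and `group_by_cell` are hypothetical, and the midpoint rule is only one assumed way to decide which cell a stroke belongs to.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Stroke:
    # (x, y) samples in input panel coordinates; hypothetical structure
    points: List[Tuple[float, float]]


def cell_index(stroke: Stroke, panel_width: float, cell_count: int) -> int:
    """Return the cell containing the horizontal midpoint of the stroke."""
    xs = [x for x, _ in stroke.points]
    mid_x = (min(xs) + max(xs)) / 2
    cell_width = panel_width / cell_count
    index = int(mid_x // cell_width)
    # Clamp so ink that drifts past either edge still lands in a valid cell.
    return max(0, min(cell_count - 1, index))


def group_by_cell(strokes: List[Stroke], panel_width: float,
                  cell_count: int) -> Dict[int, List[Stroke]]:
    """Collect strokes per cell so each cell can be recognized on its own."""
    cells: Dict[int, List[Stroke]] = {}
    for stroke in strokes:
        cells.setdefault(cell_index(stroke, panel_width, cell_count), []).append(stroke)
    return cells
```

Each group of strokes could then be passed to a recognizer independently, which matches the idea that relationships between adjacent characters are disregarded.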

A subsidiary version of the character entry mode uses a shorthand, gestural character set instead. This is not required for most languages with current input and recognition technologies, but may have advantages for certain specialized applications, or for the input of special characters or functions.

All input modes should be available to the user at all times. The user will generally have to select which mode to use, so a method of switching must be provided. This does not preclude development of a hybrid approach, or having the system automatically select the mode most suitable to the current situation. If a gestural shorthand is available, it should be offered as a part of the natural character input method; both will be accepted, and the user may change the input method per character.

In certain cases, such as where the note taking must be as fast as possible, or the user is combining images, formulas and other data with words, a mode or application may be provided that will store the gestures without live translation. Later, the user may select all or part of the gestures to translate. This behavior should follow the pattern covered here when similarities arise, in order to preserve a consistent interface. Portions will, of course, be different.
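A minimal sketch of this deferred-translation mode might look like the following. The `InkNote` class and the `recognizer` callable are hypothetical; the point is only that raw gestures are stored untouched and translated later for whatever subset the user selects.

```python
class InkNote:
    """Stores raw strokes without live translation (illustrative only)."""

    def __init__(self):
        self.strokes = []        # raw gestures, never modified by translation
        self.translations = {}   # tuple of stroke indices -> recognized text

    def add_stroke(self, stroke):
        """Record a stroke and return its index for later selection."""
        self.strokes.append(stroke)
        return len(self.strokes) - 1

    def translate(self, indices, recognizer):
        """Translate only the selected strokes; the original ink is kept."""
        selected = [self.strokes[i] for i in indices]
        text = recognizer(selected)
        self.translations[tuple(indices)] = text
        return text
```

Keeping the ink alongside the translation means images, formulas and other non-text gestures are not lost if the user translates only part of a note.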

The finger may also, on some devices, be used to input gestural writing without a pen. Many of the principles outlined in this pattern will apply, but the size of the finger may obscure the screen and make this sub-optimal.

Interaction Details

When a field that can accept character entry gains focus, the input panel should open in the last used mode. This mode may be a virtual keyboard; because the user can switch between modes, the same principle applies.
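The last-used-mode behavior can be sketched as a small piece of state. The `InputMode` and `InputPanel` names, and the choice of word entry as the initial default, are assumptions made for illustration.

```python
from enum import Enum


class InputMode(Enum):
    WORD = "word"
    CHARACTER = "character"
    KEYBOARD = "keyboard"


class InputPanel:
    """Remembers the last mode the user chose (hypothetical sketch)."""

    def __init__(self, default_mode=InputMode.WORD):
        self.last_mode = default_mode  # assumed default before any user choice
        self.mode = None
        self.is_open = False

    def on_field_focus(self):
        # Open in whatever mode was used most recently.
        self.is_open = True
        self.mode = self.last_mode

    def switch_mode(self, mode):
        # A manual switch becomes the new remembered mode.
        self.mode = mode
        self.last_mode = mode

    def on_input_complete(self):
        self.is_open = False
```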

Whenever the user makes a gesture in the input panel, the path taken is traced as a solid line in real time, simulating pen on paper. As soon as a word (or for character entry, a character) is recognized, it should be offered as a candidate. The translated characters and words are treated the same as the candidate words discussed in Autocomplete & Prediction. See that pattern for additional details on presentation, the interaction to select alternatives and on user dictionaries.

To provide sufficient space for the user to write, scrolling must be allowed for within the input panel. When the panel is filled, the system may automatically scroll, or the user may be allowed to scroll manually instead, to enable review and correction. Avoid dynamically adding additional space to the input panel, as this will just obscure more of the screen.
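One possible automatic scrolling rule is sketched below: the panel keeps its size, and the content scrolls once the free writing room to the right of the ink drops below a threshold. The function name, the 25% `margin` and the left-to-right writing direction are all assumptions.

```python
def auto_scroll_offset(ink_right_edge, scroll_offset, panel_width, margin=0.25):
    """Return the scroll offset that keeps free writing room in a fixed-size panel.

    All values are in the same horizontal units (e.g. pixels); left-to-right
    writing is assumed.
    """
    visible_right = scroll_offset + panel_width
    free_space = visible_right - ink_right_edge
    if free_space >= panel_width * margin:
        return scroll_offset  # still enough room; do not move the ink
    # Scroll so the newest ink sits near the left edge, freeing most of the panel.
    return max(0.0, ink_right_edge - panel_width * margin)
```

The previous ink remains reachable by scrolling back manually, so the user can still review and correct it.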

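Completion of input may be handled in one of two ways. Some systems, such as Windows, require the user to explicitly accept the translated input before it is placed in the field. Others commit text live, as it is recognized, which also makes some sense. Both approaches should be considered.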

Some provision must be made for functions which are not visible characters, such as line feeds, ok/enter, backspace and tab. These should be keys visible as part of the input panel. See the Keyboards & Keypads pattern for additional information on key placement; the handwriting area of the input panel may be considered equivalent to the character keys, in this sense.

Gestures may also be supported for the user to more quickly input key functions. However, these will require learning, so should be used as a secondary method in most cases; continue to provide keys for these functions as well. See the appropriate section for more details on gestures.

Some pens will have buttons, which the user can activate to bring up contextual menus or which may be programmed to perform other functions. Users often do not notice these buttons, so they should be treated like gestures: only as shortcuts to functions that can also be reached by other means.

...Win7 gesture to insert corrections, etc.

Presentation Details

Writing is entered into an input panel that appears as a part of the screen. For small screens, this should always be a panel docked to the bottom of the viewport, and should automatically open when an input field is placed into focus. For larger screens, the input panel may be a floating area displayed contextually, adjacent to the current field requiring input. This panel must always be below the input field, to prevent obscuring the information.
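The placement rules above can be sketched as a single function. `Rect` and `place_input_panel` are hypothetical names, and the handling of a field too close to the bottom of the viewport (returning an amount to scroll the page so the panel still fits below the field) is an assumption, not something the pattern specifies.

```python
from dataclasses import dataclass


@dataclass
class Rect:
    x: float
    y: float
    width: float
    height: float


def place_input_panel(viewport, field, panel_w, panel_h, small_screen):
    """Return (panel_rect, page_scroll_by) in viewport coordinates."""
    if small_screen:
        # Small screens: dock across the bottom of the viewport.
        return Rect(0, viewport.height - panel_h, viewport.width, panel_h), 0
    # Larger screens: float directly below the field, kept on screen horizontally.
    x = max(0, min(field.x, viewport.width - panel_w))
    y = field.y + field.height
    # Assumed fallback: if the panel would run off the bottom, scroll the page
    # up by the overflow so the panel can remain below the field.
    overflow = max(0, y + panel_h - viewport.height)
    return Rect(x, y - overflow, panel_w, panel_h), overflow
```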

For very small screens, the input panel may take up essentially the entire screen. In this case, special consideration must be taken to display the text already entered in order to provide context to the user.

When input is completed, the input panel should disappear to allow more of the page to appear.

Any delays in translation or conversion should be indicated with a Wait Indicator so the user is aware the system is still working. Besides the usual risk of the user thinking the system has hung, they may believe the phrase cannot be interpreted, and will waste time trying to correct it.

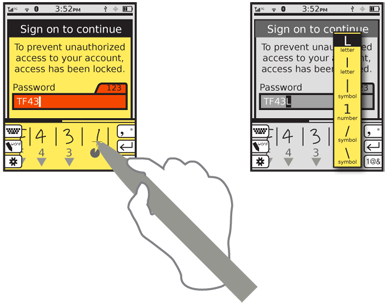

If the pen can be detected as a unique item, the input panel may be placed anywhere on the screen. Otherwise the panel must always be placed to avoid accidental input, usually along the bottom of the viewport. Resistive and most capacitive screens will perceive multiple inputs and may not be able to tell the difference between the pen and the user's hand.
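A hedged sketch of this decision follows. The pointer type strings `"pen"` and `"touch"` mirror the kind of values many platforms report but are used here only as illustrative labels, and both function names are hypothetical.

```python
def accept_as_ink(pointer_type, inside_panel, pen_detectable):
    """Decide whether a contact should be treated as handwriting.

    pointer_type: illustrative label such as "pen" or "touch".
    inside_panel: True if the contact falls within the input panel.
    pen_detectable: True if the hardware can tell the pen from a hand.
    """
    if not inside_panel:
        return False
    if pen_detectable:
        # A resting hand is ignored; only the pen draws.
        return pointer_type == "pen"
    # Pen and hand look alike, so every contact in the panel counts as ink.
    return True


def panel_placement(pen_detectable):
    """Free placement only when a resting hand cannot cause accidental input."""
    return "anywhere" if pen_detectable else "bottom"
```

When the pen cannot be isolated, the second branch is why the panel belongs at the bottom of the viewport: any part of the hand that touches the panel will be read as writing.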

Cursors must continue to be provided within any input areas on the screen, regardless of the method used. Within the handwriting input panel, a small dot or crosshair should be used to indicate the current pen position. When the pen leaves the area, this cursor must disappear.

Antipatterns

The space taken by the input panel must be considered by the page display. Do not allow important information to be obscured by the panel. For example, do not require the panel to be closed before the user can scroll to the bottom of a form and activate the submit button. Consider the input panel to be a separate area instead of an overlay so items may be scrolled into view.
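Treating the panel as a separate area rather than an overlay can be sketched as shrinking the page's visible height while the panel is open, then scrolling against that reduced height. Both function names are hypothetical and the units are assumed to be pixels.

```python
def content_viewport_height(screen_height, panel_height, panel_open):
    """Height left for page content once the panel claims its own area."""
    return screen_height - panel_height if panel_open else screen_height


def scroll_to_reveal(item_top, item_height, scroll_offset, viewport_height):
    """Return the scroll offset that brings an item fully into view."""
    item_bottom = item_top + item_height
    if item_top < scroll_offset:
        return item_top
    if item_bottom > scroll_offset + viewport_height:
        return item_bottom - viewport_height
    return scroll_offset
```

For example, with an 800 pixel screen and a 300 pixel panel open, a submit button spanning 760 to 800 is revealed by scrolling the content to offset 300, with no need to close the panel.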

Handwriting recognition can be very fast and may even be approximately as error prone as typing on a keyboard. However, correction methods and other parts of the interface can serve to make the overall experience very much slower. Use the methods described here to reduce clicks and provide automatic, predictive and otherwise easy access to mode changes.

Respect user-entered data, and do whatever is possible to preserve it. Use the principles outlined in the Cancel Protection pattern whenever possible. Especially when user input must be committed before being populated, do not allow gestures to be discarded by modal dialogues or other transient conditions.

Separate entry areas are generally no longer required technically, so they are not suggested unless there is some specific technical or interface reason that the screen cannot or should not be used. If only one screen or portion of the screen is to be used for pen input, ensure it is as optimized as possible for this task. Generally, this area may be dedicated to input, and take on all the behaviors of the input panel as described above, when in pen input mode.